Why accountability—not intelligence—will define the next phase of autonomous telecom systems.

Most telecom AI content focuses on what AI can do.

Far less attention is paid to what happens after AI starts deciding.

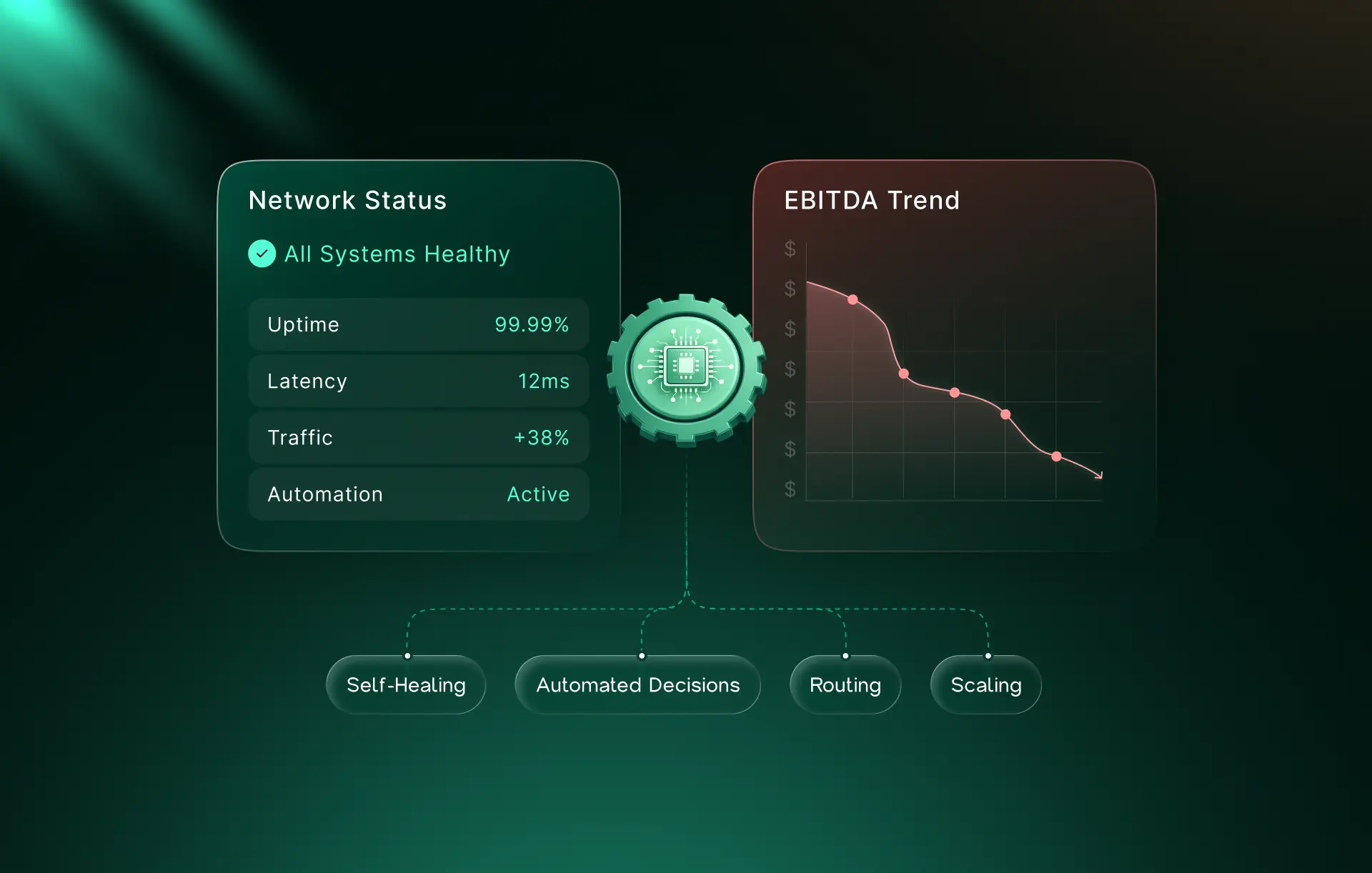

Networks are now making decisions in milliseconds—rerouting traffic, prioritizing sessions, resolving anomalies, and enforcing policies without waiting for human intervention. That shift is no longer experimental. It is operational reality.

And it introduces a new question that operators, boards, and regulators are already asking:

When the network decides, who is accountable for the outcome?

This article is not about ethics.

It is about operational risk, system design, and commercial trust.

Why AI Without Governance Becomes a Risk Multiplier

In traditional networks, accountability was implicit.

A human configured the policy. A system executed it.

Autonomous networks break that chain.

When AI systems act faster than human oversight, responsibility doesn’t disappear—it fragments across models, policies, platforms, and teams. If a decision causes service degradation, compliance exposure, or customer impact, tracing why it happened becomes as important as correcting what happened.

Without governance, autonomy accelerates outcomes without preserving accountability. (This is the same execution gap that appears when APIs are exposed without being operationalized.)

At scale, that isn’t efficiency—it’s exposure.

What Decision Ownership Means in an AI-Driven Network

Decision ownership in autonomous systems is often misunderstood.

It is not about:

- who trained the model,

- who deployed the software,

- or who owns the infrastructure.

It is about whether an operator can answer, after the fact, three operational questions:

- What action did the network take?

- Why was that action taken at that moment?

- Under which policies and constraints did it occur?

If those answers require manual reconstruction or cross-team escalation, the network may be autonomous—but it is not governable.

In production environments, decision ownership must be explicit, traceable, and defensible.
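
To make the three questions concrete, here is a minimal sketch of a decision record captured at the moment the network acts. It is an illustration under stated assumptions, not a reference schema: the class name `DecisionRecord`, every field name, and the sample values are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One autonomous network action, captured when it is taken.

    All field names are hypothetical and chosen for illustration.
    """
    action: str                   # what the network did
    trigger: str                  # why it acted at that moment
    policy_ids: tuple[str, ...]   # which policies and constraints applied
    model_version: str            # which model produced the decision
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def unanswered_questions(self) -> list[str]:
        """Return the operational questions this record cannot answer."""
        gaps = []
        if not self.action.strip():
            gaps.append("what")
        if not self.trigger.strip():
            gaps.append("why")
        if not self.policy_ids:
            gaps.append("under which policies")
        return gaps


# Illustrative values only; not taken from any real network.
record = DecisionRecord(
    action="reroute traffic from link A to link B",
    trigger="packet loss on link A exceeded 2% for 500 ms",
    policy_ids=("sla-gold-latency", "max-reroutes-per-minute"),
    model_version="anomaly-detector-1.4",
)
```

A record with no gaps can answer all three questions without manual reconstruction. A record with a missing trigger or an empty policy list flags exactly which question would require escalation to answer.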

Explainable vs. Auditable AI: Why the Difference Matters

Explainability is often treated as the endpoint of AI transparency. It is not.

Explainable AI helps operators understand how a model behaves.

Auditable AI allows operators to prove why a specific decision occurred.

In telecom environments, that distinction matters. Dashboards and confidence scores are insufficient when decisions must be reviewed days later, across jurisdictions, or under regulatory scrutiny.

Auditable AI requires:

- decision lineage,

- policy context,

- reproducibility,

- and immutable records tied to network actions.

Visibility shows behavior.

Auditability defends it.
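
One way to approximate the requirements listed above is a hash-chained, append-only log. In the sketch below, each entry commits to the one before it (lineage), the payload carries the policy context and the inputs needed to replay the decision, and any later edit breaks the chain. The class `AuditLog` and the payload keys are assumptions made for illustration. An in-memory list like this is tamper-evident rather than truly immutable, so a production system would also need write-once storage.

```python
import hashlib
import json


class AuditLog:
    """Append-only log in which each entry commits to the previous one."""

    GENESIS = "0" * 64  # placeholder hash used before the first entry

    def __init__(self):
        self._entries = []

    def append(self, decision: dict) -> str:
        """Store a decision and return the hash that seals it into the chain."""
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        payload = json.dumps(decision, sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self._entries.append(
            {"prev": prev_hash, "payload": payload, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; return False if any entry was altered."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev_hash + entry["payload"]).encode()
            ).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical decisions; the keys are illustrative, not a standard schema.
log = AuditLog()
log.append({"action": "reroute", "policy": "sla-gold", "model": "v1.4",
            "inputs": {"loss_pct": 2.3}})
log.append({"action": "prioritize", "policy": "emergency-calls", "model": "v1.4",
            "inputs": {"queue_depth": 120}})
intact = log.verify()
```

Here `intact` is `True` for the untouched log. Changing any stored payload afterwards makes `verify()` return `False`, which is the property a reviewer relies on when a decision is examined days later.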

(Trust compounds only when platforms are designed as products, not internal abstractions.)

Why Governance Must Be Architectural, Not Procedural

Many operators attempt to govern AI through process: approvals, exception workflows, post-event reviews.

That approach fails at scale.

If trust depends on humans being “in the loop,” autonomy collapses under real-time conditions. Networks operate too fast for manual governance to keep up.

Effective governance must be built into the system architecture itself—enforced through policy layers, decision boundaries, and automated controls that operate at machine speed. (This shift mirrors how modern telco stacks are evolving from reactive control to built-in adaptability.)

When governance is architectural, autonomy scales safely.

When governance is procedural, autonomy slows—or fails.

How Operators Enable Confidence Without Slowing Performance

Governance does not require slowing networks.

It requires bounding decisions, not blocking them.

Operators preserve both performance and trust when:

- AI decisions operate within predefined policy limits,

- outcomes are logged by default, not by exception,

- and escalation is automated, not manual.

This approach allows networks to move at machine speed while remaining explainable, auditable, and defensible.
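
The three conditions above can be sketched in a few lines. In this simplified example, a proposed traffic shift is clamped to a policy limit instead of being rejected, every decision is logged, and an out-of-bounds proposal is queued for review without delaying the bounded action. The names `PolicyLimit` and `apply_decision`, and the 30% limit, are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyLimit:
    """Hypothetical bound on how much traffic one decision may shift."""
    max_shift_percent: float


def apply_decision(proposed_shift: float, limit: PolicyLimit,
                   log: list, escalations: list) -> float:
    """Bound a proposed traffic shift, log it, and escalate if it was clamped.

    Returns the shift that is actually applied.
    """
    # Bound, don't block: clamp the proposal into the allowed range.
    applied = min(max(proposed_shift, 0.0), limit.max_shift_percent)
    clamped = applied != proposed_shift

    # Logged by default: every decision is recorded, not only exceptions.
    log.append({"proposed": proposed_shift, "applied": applied,
                "clamped": clamped})

    # Automated escalation: the bounded action still proceeds, and the
    # out-of-bounds proposal is queued for later review.
    if clamped:
        escalations.append({"reason": "proposal outside policy limit",
                            "proposed": proposed_shift})
    return applied


log, escalations = [], []
limit = PolicyLimit(max_shift_percent=30.0)
within = apply_decision(20.0, limit, log, escalations)  # inside the limit
beyond = apply_decision(55.0, limit, log, escalations)  # clamped to 30.0
```

Both calls return immediately. The second is clamped to the limit and produces one escalation entry, while the log holds a record of both decisions.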

Governance is not the opposite of autonomy.

It is what allows autonomy to operate at scale.

TelcoEdge Perspective

The next phase of autonomous networks will not be defined by intelligence alone.

It will be defined by whether decisions remain defensible at scale—technically, operationally, and commercially.

As networks take on greater responsibility, trust becomes a system property, not a promise. And trust, in this context, is built through architecture.

Because in autonomous telecom systems, accountability cannot be added later.

It must be designed in from the start.

Autonomy without governance accelerates risk.

Governance without architecture slows progress.

The operators who succeed will design for both.